Oracle’s AI push is more than a product evolution story. It is a decision point for CIOs who depend on the firm to run core processes across finance, HR, supply chain, and industry operations. The CIO’s question is no longer whether Oracle can innovate fast enough. It is whether the operating model, governance structures, and GSI relationships built to support it can absorb that innovation without creating new layers of risk and long-term dependency.

CIOs who apply the Services-as-Software™ playbook before their next Oracle renewal will pull ahead. Those who don’t will pay to close the gap later at full price.

What Oracle wants you to hear is “more AI embedded into enterprise workflows should improve productivity, decision making, automation, and user experience.” For many customers, that sounds like the next logical phase of enterprise resource planning (ERP) and enterprise platform modernization. But the deeper enterprise reality is more complicated. What looks like Oracle doing more of the work is actually the firm accelerating how fast the hard work arrives at your doorstep.

When AI becomes native to the platform, the burden of transformation does not disappear; it shifts. The platform may simplify or absorb some of the technical work, but the hardest work becomes more enterprise-specific: ensuring data readiness, policy consistency, process redesign, governance, human oversight, exception handling, and business accountability. All of this raises the demands on operating model discipline.

Adopting Oracle AI turns a “buying software capability” decision into an “enterprise change” decision. Poor adoption of a new feature set is no longer the major risk. The greater risk is that embedded AI gets layered into broken processes, fragmented data, unclear decision rights, and overextended transformation programs. When that happens, enterprises do not get productivity. They get higher complexity at greater speed.

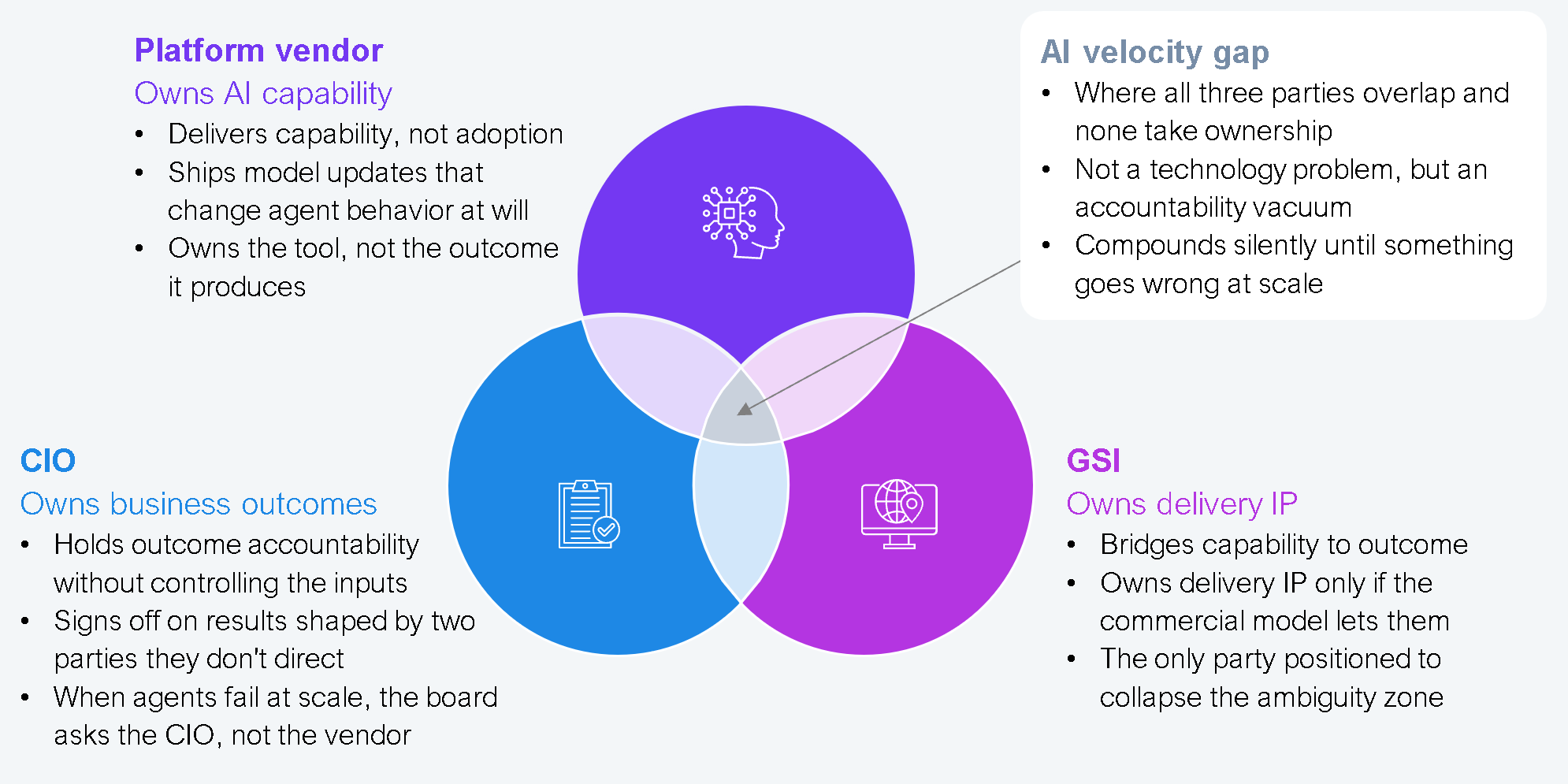

HFS calls this the “AI velocity gap”: the growing divergence between how fast AI capability is deployed and how fast organizations can absorb, govern, and trust it. If Oracle owns the platform, the GSI owns delivery, and the CIO owns the business outcome, then any ambiguity between those layers creates execution drag and blame-shifting (see Exhibit 1).

Source: HFS Research, 2026

For years, the Oracle services model was built around partner selection based on brand, geographic reach, certification counts, and the size of the delivery bench. But as AI compresses the distance between deployment and business consequences, that model leaves gaps, and enterprises often end up coordinating those gaps themselves, absorbing risk that was never theirs to own.

This is why the traditional services playbook becomes unviable as enterprises need more than a generic implementation arm. They need partners that can absorb transformation complexity without multiplying it. That means the buying lens must shift from “who can deploy Oracle fastest?” to “who can make Oracle AI work inside my operating model without increasing risk, cost, or long-term dependence?”

That is a much higher bar. The partners that clear it will be selling codified intelligence rather than capacity. Their domain knowledge is embedded in prebuilt workflows, orchestration models, and reusable AI assets built specifically on Oracle’s platform. This is the shift from services to Services-as-Software, where the GSI’s value is no longer in the size of its certified resources but in the depth of its IP. For CIOs, that distinction matters enormously as it determines whether the transformation cost is a one-time investment or a recurring dependency.

CIOs who are getting this right are turning Oracle partner selection from a procurement exercise into a governance design exercise. The objective is not to find the biggest Oracle partner, but to find one that operates on the Services-as-Software model of prebuilt, accountable, and outcome-oriented solutions. Before any Oracle AI engagement moves forward, mutual accountabilities across the CIO, GSI, and business must be locked as seven shared commitments:

CIOs who apply the six-part playbook above, hold their GSI to the Services-as-Software standard, and close the AI velocity gap before go-live will convert Oracle’s momentum into measurable business value. Those who treat this as a platform upgrade will pay for that assumption twice.
