Agentic AI is ready, but regulatory frameworks and policies aren’t, creating a velocity gap in enterprise AI adoption. As CTOs chase product innovation, regulatory requirements are turning into a bottleneck. HFS Research finds that 83% of enterprises are stuck in early-stage AI experimentation, and the regulatory picture is gloomier still.

Piyush Jain, Deputy CTO at L&T Technology Services (LTTS), outlined the steps that LTTS has taken to plug that enterprise-regulator adoption gap while accelerating innovations in agentic AI.

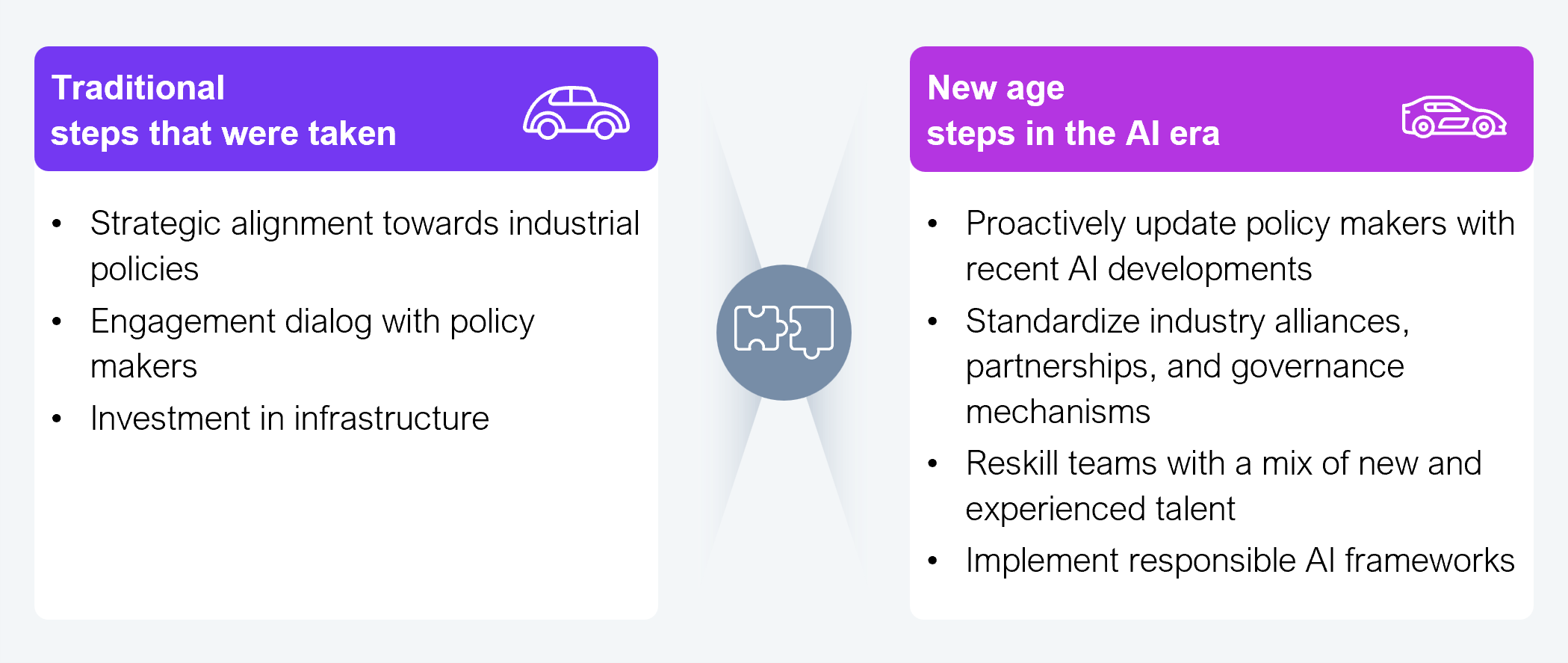

The automotive industry illustrates this regulatory challenge as it tries to strike a fine balance between innovation and safety or performance. In the US, the National Highway Traffic Safety Administration (NHTSA) serves as the primary regulator, albeit from a safety perspective alone. In the European Union, Tesla’s full self-driving feature has faced regulatory delays due to safety concerns. Historically, many firms have responded by aligning their strategy with industrial policies, engaging policymakers in dialog, and investing in infrastructure.

However, these steps are no longer sufficient, and firms must now take a more proactive posture. This involves updating policymakers on recent AI developments; standardizing industry alliances, partnerships, and governance mechanisms; reskilling their teams; and implementing responsible AI frameworks to address the velocity gap (see Exhibit 1). Without such measures, regulatory friction will continue to slow AI deployment, and enterprises will miss out on the full benefits of agentic AI, such as faster product development and new revenue streams.

Source: HFS Research, 2026

Delayed regulatory approvals, stringent quality checks, and the high cost of failed innovations are constraining the deployment of advanced AI technologies. This is particularly pronounced in core product design, engineering, and manufacturing due to the operational complexity, fragmentation, and diverse standards in this domain. AI deployment covers only parts of the workflow, not the end-to-end product lifecycle. Tools such as large and small language models (LLMs, SLMs) and retrieval-augmented generation (RAG) produce output that’s probabilistic in nature and act as “black boxes.” Unlike with deterministic models, the same input can yield different output, so repeatability of results such as product designs and code can be an issue. This raises regulatory concerns around product usage, safety, reliability, and performance.
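The repeatability concern can be illustrated with a minimal Python sketch. It is a toy stand-in, not an LLM: the candidate names and weights below are hypothetical, and the only point is the contrast between a deterministic pick and a weighted random draw, which becomes reproducible only when the random seed is fixed and recorded.

```python
import random

# Hypothetical design candidates and weights, invented for illustration only.
CANDIDATES = ["bracket_v1", "bracket_v2", "bracket_v3"]
WEIGHTS = [0.5, 0.3, 0.2]


def greedy_pick():
    """Deterministic: always return the highest-weight candidate."""
    return max(zip(WEIGHTS, CANDIDATES))[1]


def sampled_pick(rng):
    """Probabilistic: draw one candidate in proportion to its weight."""
    return rng.choices(CANDIDATES, weights=WEIGHTS, k=1)[0]


def run(seed, n=5):
    """Draw n candidates from a generator seeded with the given value."""
    rng = random.Random(seed)
    return [sampled_pick(rng) for _ in range(n)]


if __name__ == "__main__":
    print("greedy:", greedy_pick())    # same answer on every call
    print("seed 7:", run(7))           # repeatable because the seed is fixed
    print("seed 7 again:", run(7))     # identical to the previous line
    print("unseeded:", sampled_pick(random.Random()))  # may differ per run
```

Recording the seed alongside each output is one simple way to make a probabilistic step replayable during a review; whether a given production model exposes such a control is an assumption that has to be checked per tool.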

LTTS aims to address these constraints by adopting a human-in-the-loop model for gate reviews and quality checks, optimizing team members’ bandwidth and time spent. In parallel, it uses a responsible AI framework to avoid bias and ensure full traceability of decisions and output across the product lifecycle.
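A human-in-the-loop gate with a decision trail might look roughly like the sketch below. This is not LTTS' implementation; the class names, fields, and identifiers are hypothetical and chosen only to show the idea that AI output advances only on a named reviewer's sign-off and that every decision, approved or rejected, is logged.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class GateDecision:
    """One reviewer decision on one AI-generated artifact (hypothetical schema)."""
    artifact_id: str
    model_name: str
    reviewer: str
    approved: bool
    reason: str
    timestamp: str


@dataclass
class GateReview:
    """Holds AI output at a gate until a named human reviewer signs off."""
    audit_log: list = field(default_factory=list)

    def review(self, artifact_id, model_name, reviewer, approved, reason):
        decision = GateDecision(
            artifact_id=artifact_id,
            model_name=model_name,
            reviewer=reviewer,
            approved=approved,
            reason=reason,
            timestamp=datetime.now(timezone.utc).isoformat(),
        )
        # Record every decision, including rejections, for traceability.
        self.audit_log.append(decision)
        return approved

    def history(self, artifact_id):
        """Return all recorded decisions for one artifact, oldest first."""
        return [d for d in self.audit_log if d.artifact_id == artifact_id]


if __name__ == "__main__":
    gate = GateReview()
    gate.review("design-001", "slm-a", "reviewer-1", False, "fails tolerance check")
    gate.review("design-001", "slm-a", "reviewer-1", True, "revised and re-checked")
    for entry in gate.history("design-001"):
        print(entry.timestamp, entry.reviewer, entry.approved, entry.reason)
```

Keeping rejections in the same log as approvals means the full path to a released artifact can be reconstructed later, which is the kind of traceability a regulator is likely to ask for.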

Employees are central to the development of trusted, responsible AI. LTTS finds fresh graduates AI-ready because they’ve been exposed to several tools and approaches early on. In contrast, experienced employees must unlearn traditional ways of working and adapt to new ones. For effective AI implementations, teams must mix fresh graduates with experienced veterans. Such cross-pollination of ideas and knowledge helps make implementations both cutting edge and reliable.

Not all employees have to understand how algorithms are created or how models are built. They should just be comfortable using existing models and tools such as LLMs, SLMs, traditional AI/ML, and RAG. Training on prompt engineering can improve their productivity, keep costs under control, and help them make informed decisions, such as whether a task really requires a GPU or can be handled by a CPU alone.

HFS’ visit to LTTS’ labs gave first-hand insights into how forward-looking firms are operationalizing this shift. Cross-functional, technology-oriented teams are integrating advanced AI tools into automotive workflows to enable different levels of autonomy and offer vehicle features on a subscription basis. Cars are becoming more complex, making affordability an issue. With subscriptions, customers can buy a basic model and enable advanced features as needed, paying only for what they use.

To adapt governance mechanisms and standards for advanced AI, CTOs must form consortiums (such as the IoT consortium) for interoperability across diverse solution providers. They should also differentiate their own offerings within the agreed-upon mechanisms and standards. Those who wait for regulations to be defined before adopting emerging technologies risk losing business to competitors that proactively address roadblocks.
