As users and leaders cede capabilities to AI, they may be in danger of losing what makes them special. To address this, enterprise business and technology leaders must come together to break out of the black-box land grab by the frontier-model AI giants and reclaim their firms’ intelligence with a new sovereign enterprise AI stack. Fail in this mission, and you’ll enable the likes of OpenAI, Google, and Anthropic to assimilate much of what differentiates your business and share it with rivals.

The rise of new capability layers that enterprises can and should own is helping to define a new sovereign enterprise AI stack. Examples include multi-modal security from Mighty (HFS’ Highlight: Mighty updates workflow protection to handle multi-modal hacks), reasoning capabilities from Sanscritic (HFS’ LinkedIn post), and Levangie Labs (HFS’ blogpost).

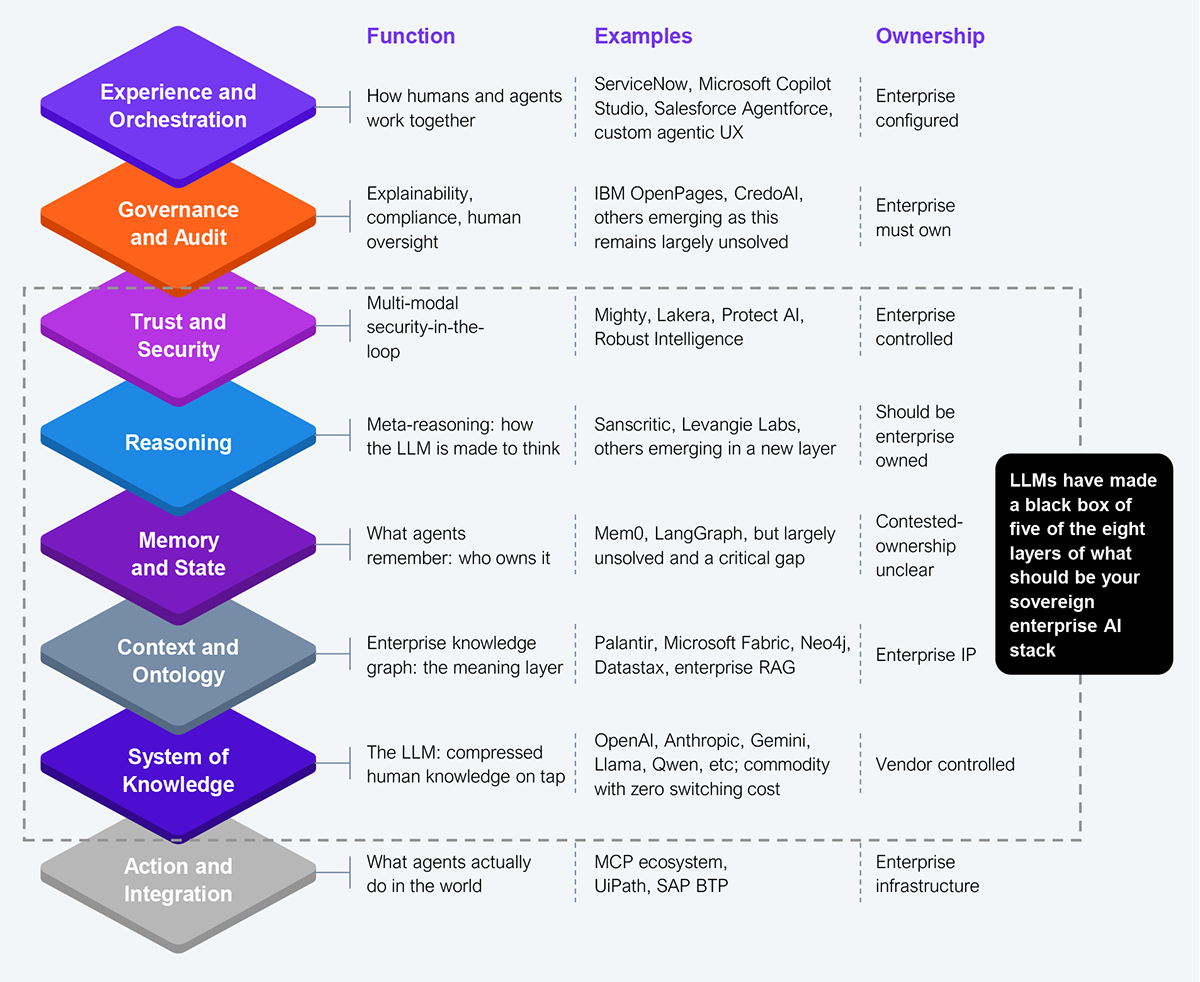

These developments suggest a model in which you rely on LLMs solely as your System of Knowledge: a concentrated font of human knowledge that feeds enterprise-owned layers of capability and differentiation, but one that does not define how you do business (see Exhibit 1).

Source: HFS Research, 2026

LLMs are becoming the new monoliths controlling not only the knowledge, but also how reasoning is conducted (how to think about that knowledge), as well as your security, and increasingly the context for all your AI activities.

Startups such as Sanscritic separate out Layer 5 (see Exhibit 1). Sanscritic is a reasoning OS, a model-agnostic universal reasoning layer, and one you can own rather than license from a foundation model vendor sucking up your intellectual property.

Mighty offers a similar decoupling from the LLM providers, this time in the Layer 6 realm of security and trust. By making its management of multi-modal attacks a layer you can own, Mighty lets you standardize protection regardless of the underlying model.

Palantir has long been giving enterprises control of their own context through Layer 6. Its approach is to build your own ontology: a semantic and operational layer on top of integrated enterprise data, models, and systems that maps business entities, relationships, and workflows into objects and links them to actions. It is a kind of digital twin of the organization that includes business logic, permissions, provenance, and executable actions.
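To make the ontology idea concrete, here is a minimal sketch of what an enterprise-owned layer of this kind might look like. It is not Palantir's API or data model; every class, field, and function name below (`OntologyObject`, `Action`, `Ontology`, `close_order`, the `crm` and `erp` source labels) is a hypothetical chosen for illustration. It shows only the ingredients named above: business entities as objects, relationships as links, provenance, permissions, and executable actions.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Set


@dataclass
class OntologyObject:
    """A business entity (hypothetical shape, for illustration only)."""
    object_id: str
    object_type: str
    properties: Dict[str, object]
    source_system: str  # provenance: which system the record came from
    links: Dict[str, List[str]] = field(default_factory=dict)  # relation -> target ids


@dataclass
class Action:
    """An executable action guarded by a simple role-based permission."""
    name: str
    allowed_roles: Set[str]
    run: Callable[[OntologyObject], str]


class Ontology:
    """Enterprise-owned registry of objects, links, and permitted actions."""

    def __init__(self) -> None:
        self.objects: Dict[str, OntologyObject] = {}
        self.actions: Dict[str, Action] = {}

    def add_object(self, obj: OntologyObject) -> None:
        self.objects[obj.object_id] = obj

    def link(self, source_id: str, relation: str, target_id: str) -> None:
        # Record a named relationship from one object to another.
        self.objects[source_id].links.setdefault(relation, []).append(target_id)

    def register_action(self, action: Action) -> None:
        self.actions[action.name] = action

    def execute(self, action_name: str, object_id: str, role: str) -> str:
        # Check the caller's role before running the action on the object.
        action = self.actions[action_name]
        if role not in action.allowed_roles:
            raise PermissionError(f"{role} may not run {action_name}")
        return action.run(self.objects[object_id])


def close_order(order: OntologyObject) -> str:
    """Hypothetical business action: mark an order as closed."""
    order.properties["status"] = "closed"
    return f"{order.object_id} closed"


ontology = Ontology()
ontology.add_object(OntologyObject("cust-1", "Customer", {"name": "Acme"}, "crm"))
ontology.add_object(OntologyObject("ord-9", "Order", {"status": "open"}, "erp"))
ontology.link("cust-1", "placed", "ord-9")
ontology.register_action(Action("close_order", {"ops_manager"}, close_order))

result = ontology.execute("close_order", "ord-9", role="ops_manager")
```

The point of the sketch is that the business logic, permissions, and provenance live in a structure the enterprise holds, independent of whichever model sits underneath it.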

Leaders that take control of these layers can build their own sovereign AI stack. Such a stack reclaims your firm’s intelligence, claims ownership, and allows you to retain control over your AI destiny.

When you build your enterprise’s knowledge future (its IP) on a third-party engine (the LLM), you are making everyone, including your competitors, better. But will this rising tide really raise all boats? And do you want that to happen? Each separated and enterprise-controlled layer you apply will stop the vendor from capturing what makes your organization unique. Your reasoning improvements stay in your reasoning layer. Your ontology stays in your knowledge graph.

HFS believes that the enterprise need for control is increasing just as LLMs head toward commodity. Think back to when databases were proprietary and differentiated (e.g., Oracle); leading solutions today (e.g., PostgreSQL) are free. We expect the same to happen to LLMs. Inference costs are collapsing, and open-source models are closing the gap. The frontier model vendors know this too, which may explain their interest in ‘owning’ as much of the enterprise stack as possible. By contrast, an enterprise that owns its own stack and the layers in between maintains its competitive advantage.

The System of Knowledge layer (the LLM) can remain vendor owned, but all other layers must be enterprise controlled. The most contested (see Exhibit 1) is Layer 4, Memory and State: what should agents remember, and who owns it? This is the realm of episodic memory across sessions, workflow continuity, agent state, and institutional memory of past decisions. We believe that even here, what an agent learns from enterprise interactions should stay inside the firm’s realm of control. Both technology and business leaders must at least have the option to choose what they wish to share with a vendor.

The enterprise AI stack is being reconfigured to provide firms with the option of greater sovereign control. Business and IT leaders must come together to prioritize that opportunity. The LLM becomes the System of Knowledge: vast, increasingly cheap, swappable, and no more differentiating than a relational database.
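As a rough illustration of a swappable System of Knowledge with enterprise-held memory, consider the sketch below. It assumes a hypothetical `KnowledgeModel` interface and two stand-in vendor classes (`VendorAModel`, `VendorBModel`) that simply echo the prompt; none of these correspond to a real vendor SDK. The `SovereignStack` class, also hypothetical, keeps memory on the enterprise side and sends it to the model only when the caller opts in.

```python
from typing import List, Protocol


class KnowledgeModel(Protocol):
    """Hypothetical interface any LLM provider adapter would satisfy."""

    def complete(self, prompt: str) -> str: ...


class VendorAModel:
    """Stand-in for one vendor's model; echoes the prompt with a tag."""

    def complete(self, prompt: str) -> str:
        return f"[vendor-a] {prompt}"


class VendorBModel:
    """Stand-in for a second, interchangeable vendor model."""

    def complete(self, prompt: str) -> str:
        return f"[vendor-b] {prompt}"


class SovereignStack:
    """Enterprise-owned wrapper: memory stays here, the model is replaceable."""

    def __init__(self, model: KnowledgeModel) -> None:
        self.model = model
        self.memory: List[str] = []  # held by the enterprise, not the vendor

    def swap_model(self, model: KnowledgeModel) -> None:
        # Changing vendors does not touch the accumulated memory.
        self.model = model

    def ask(self, question: str, share_memory: bool = False) -> str:
        # Memory is included in the prompt only when explicitly opted in.
        context = " | ".join(self.memory) if share_memory else ""
        prompt = f"{context} :: {question}" if context else question
        answer = self.model.complete(prompt)
        self.memory.append(f"Q: {question}")
        return answer


stack = SovereignStack(VendorAModel())
first = stack.ask("What is our churn risk?")

stack.swap_model(VendorBModel())
second = stack.ask("Summarize open orders", share_memory=True)
```

In this toy setup the second vendor sees the earlier question only because `share_memory=True` was passed; by default nothing from memory leaves the enterprise-controlled layer, and the memory survives the vendor swap.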

Differentiation stacks up from that System of Knowledge in layers the enterprise can own. Enterprises that start assembling their sovereign stack now will be the ones setting the terms later. Those that wait for their preferred frontier model LLM to provide all these layers in one bundle will simply hand their intelligence and IP to vendors.
