Enterprises still tend to think about AI through the lens of data: if you own your data, you must be safe. That logic is comforting, but increasingly dangerous. As AI becomes embedded in operational workflows, the more important question is no longer where data sits; it is who controls the models and the decision logic acting on it. In many cases, that control sits outside the enterprise.

For CIOs, this creates a clear accountability gap. They remain responsible for outcomes driven by AI, even when the underlying intelligence layer is owned, updated, and governed by external providers. The priority now is to make that dependency visible before it becomes an operational liability.

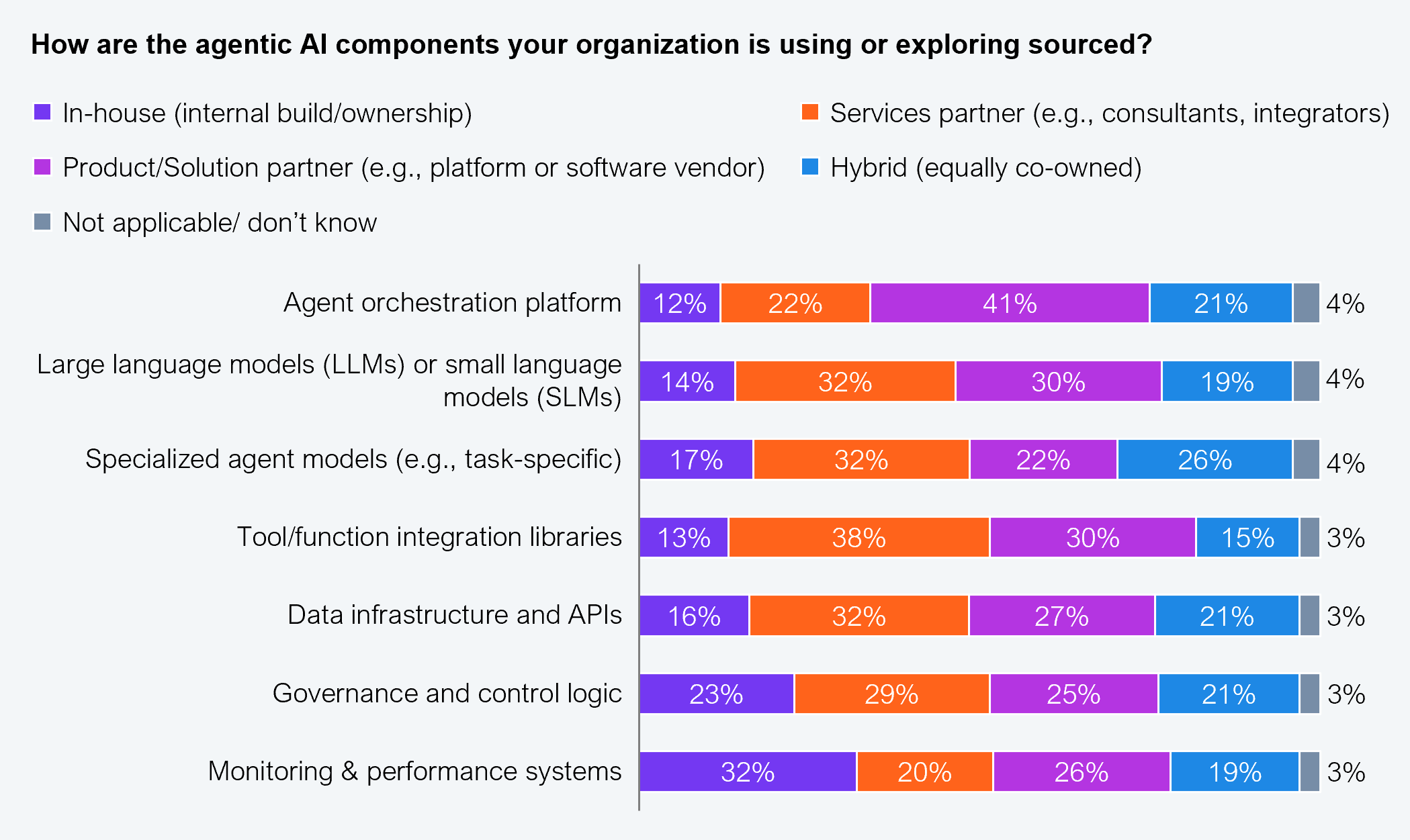

Enterprises are not building AI as a single, coherent system. They are assembling it from layers: models, orchestration platforms, data infrastructure, and governance tools, often sourced from different providers. Our research shows that most organizations rely heavily on external vendors for these components rather than building them in-house (see Exhibit 1).

Source: HFS Research, 2026

This represents a structural change in how control operates. Control is no longer centralized within the enterprise; it is distributed across the stack, often across multiple vendors with different incentives, update cycles, and governance models.

A single AI-enabled workflow may depend on one provider for the model, another for orchestration, and another for infrastructure. Each of these components can evolve independently. Models can be updated, APIs can change, orchestration rules can be modified, and infrastructure constraints can be introduced, all without direct enterprise control.

This dependency on vendors often remains hidden because it sits beneath the application layer. It becomes visible only when something breaks. For instance, during the AWS US-East-1 outage, enterprises did not lose their data. They lost access to the systems that interpret and serve that data. Customer operations stalled, internal workflows failed, and service continuity was disrupted, not because data was compromised, but because control of the execution layer sat elsewhere.

The dependency on vendors becomes more consequential as AI systems move from supporting decisions to taking action. Agentic systems are no longer limited to generating outputs. They initiate workflows, resolve requests, trigger transactions, and interact directly with customers and employees.

At that point, the nature of dependency changes. Enterprises no longer rely on external systems only to inform decisions, but also to execute them. When those systems are externally governed, the impact of failure is no longer contained within the technology layer. It moves directly into operations.

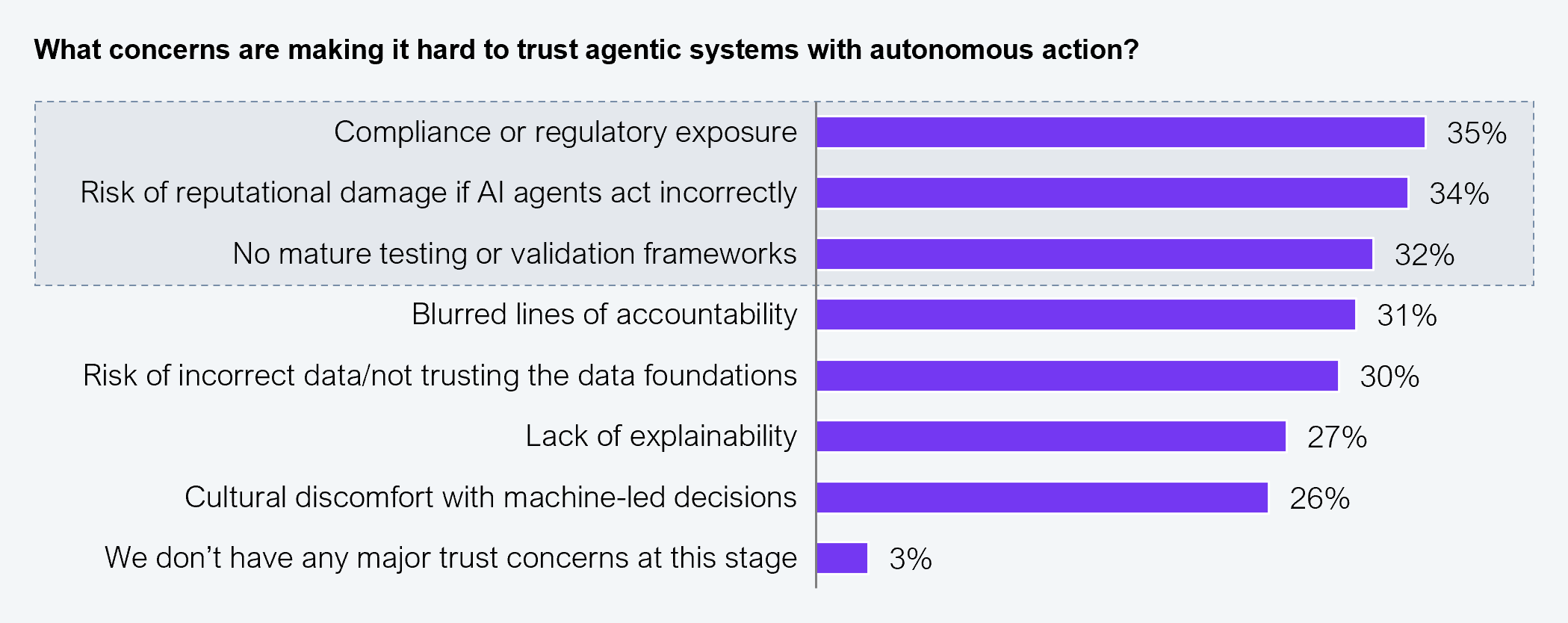

Our research shows that 35% of enterprises cite compliance exposure as their top concern with agentic systems, while 34% point to reputational damage from incorrect AI actions (see Exhibit 2). These concerns reflect how quickly system-level issues can become business-level events.

Source: HFS Research, 2026

For CIOs, the challenge is no longer just about managing AI adoption. It is about understanding who controls the systems that shape decisions and actions across the business. That is where the accountability gap begins, and where most enterprises still lack visibility.

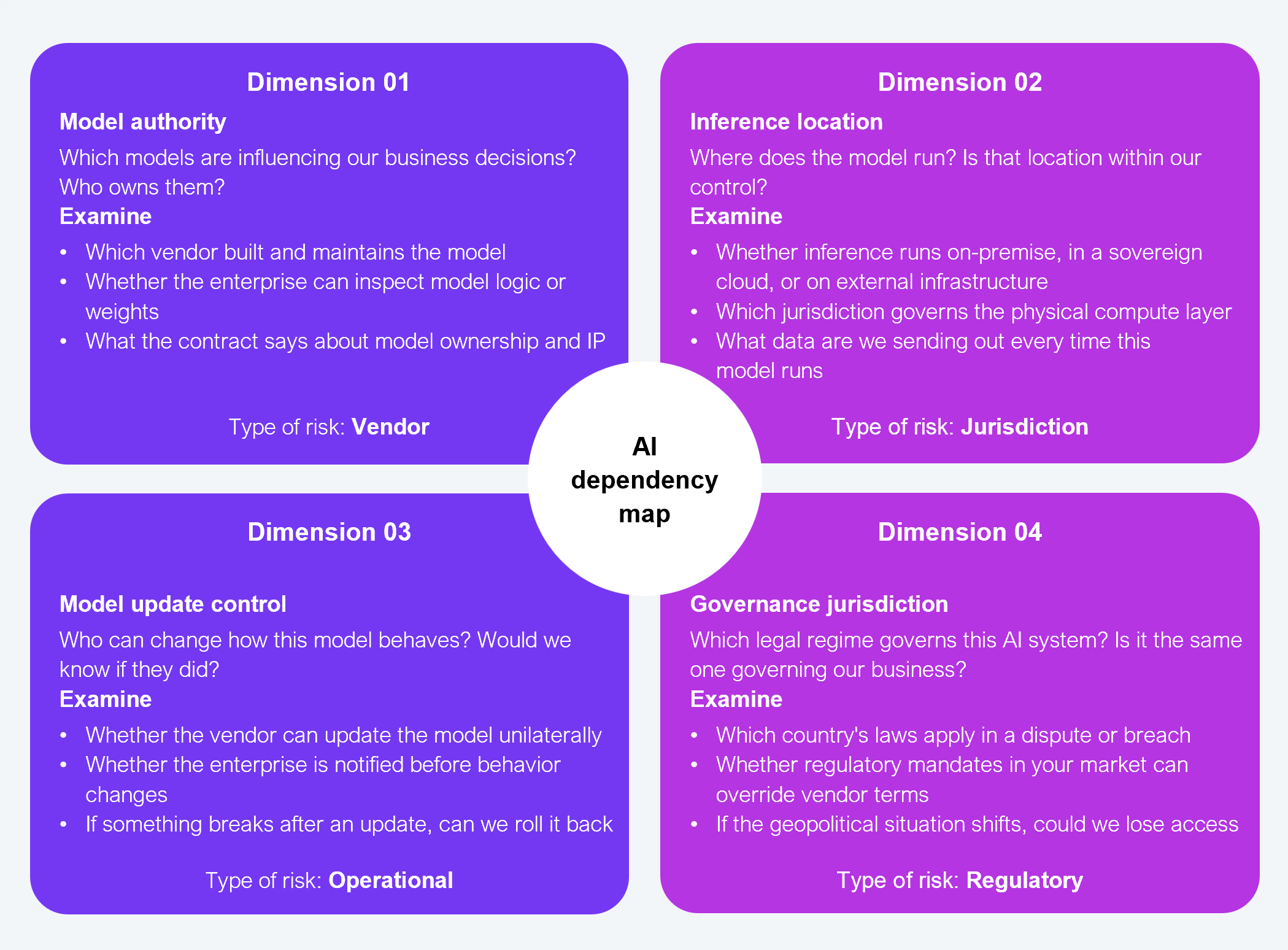

This is where the HFS AI Dependency Map becomes useful (see Exhibit 3). It offers a practical way to make control visible across the AI stack by addressing four questions: who owns the model, where it runs, who can update it, and who governs its behaviour. Taken together, the answers show whether the enterprise truly controls the intelligence operating within its workflows, or whether that control lies elsewhere.

The value of the map is not in documenting every AI component across the organization. It lies in exposing hidden dependencies where they matter most. Applied to a single critical workflow, it can quickly reveal where external control exists, where governance is weak, and where accountability and authority are out of step.

Source: HFS Research, 2026

If you can’t see who controls the models and decision logic behind your AI systems, those systems are already carrying more risk than you think. Make that control visible now, before this dependency becomes irreversible.
