You are being asked to “scale AI,” but your enterprise isn’t built for it. As a CIO, you’re on the hook to somehow corral the sprawl of data, fragmented systems, and inconsistent definitions of customers into something coherent enough for AI to support end-to-end value, something you can hang your ROI hat on.

The newly announced partnership between Rackspace Technology and Palantir is designed to capture the context you need to deliver that coherence and accelerate your enterprise to secure, scalable AI-powered outcomes.

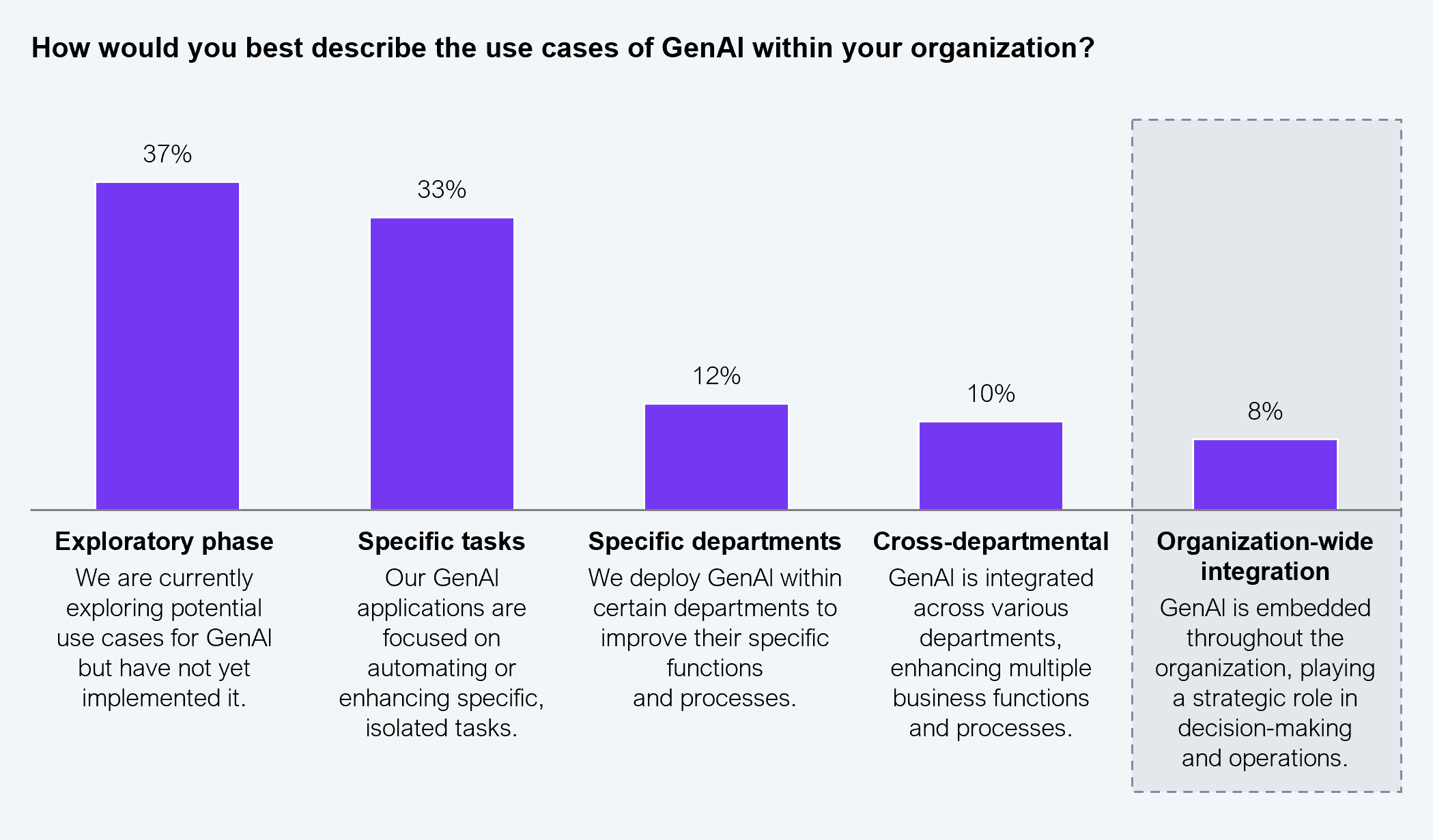

It’s time to move on from the boutique builds that led to many firms falling into the POC and pilot trap. Around 88% of enterprises remain stuck in that slow lane; many are struggling with deployment, fragmented data, limited talent, siloed business functions, and inadequate governance (HFS POV – Crack the AI maturity code). Only 8% have achieved organization-wide GenAI integration (see Exhibit 1).

Sample: N=553 G2000 decision makers

Source: HFS Research, 2026

The missing link is enterprise context: grounding AI in the definitions your firm lives and breathes, which in turn supports a faster path to production. But unless you meet real-world, audit-heavy constraints with scalable and secure hybrid, private, and sovereign cloud approaches, you risk just running another experiment.

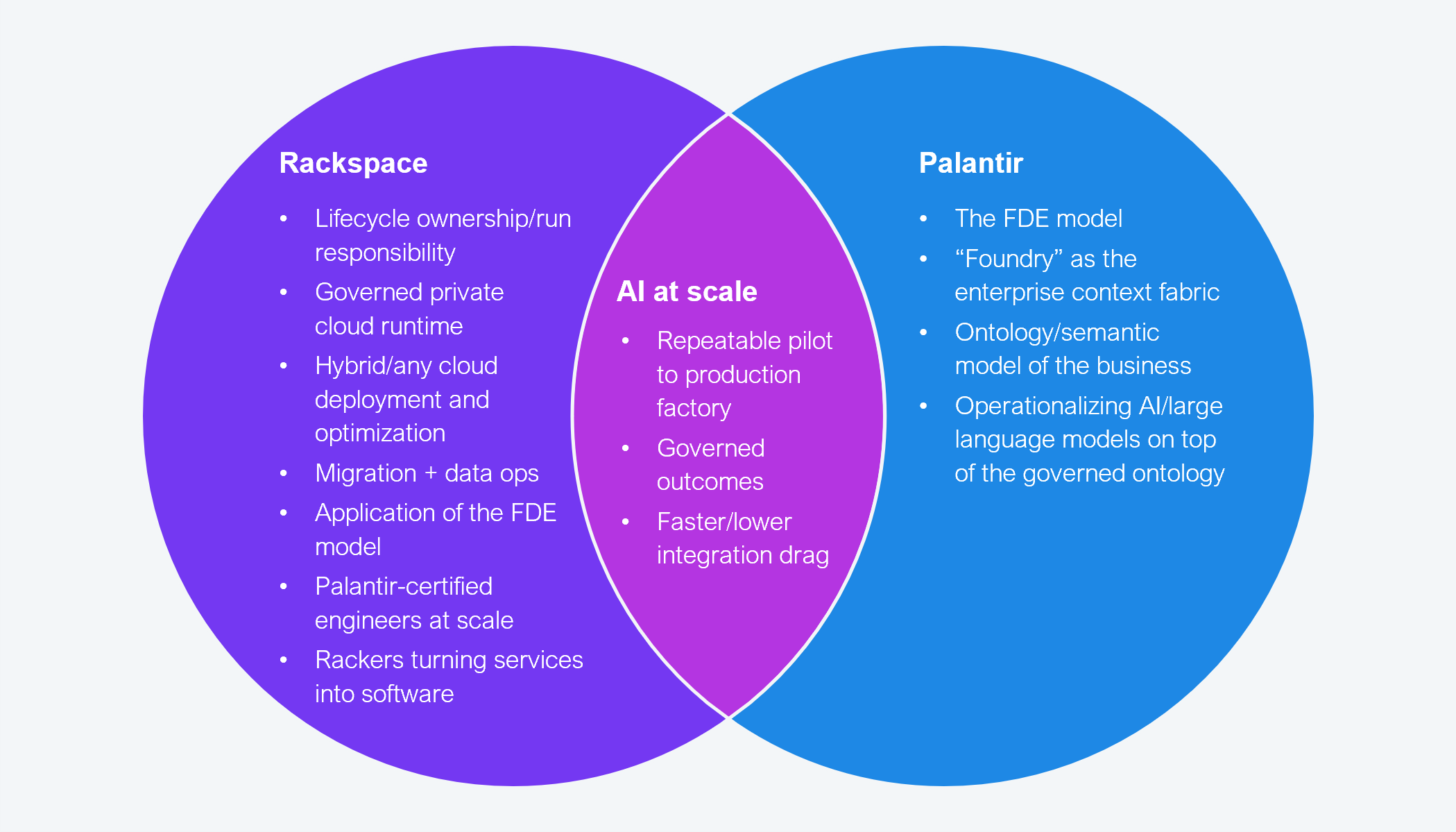

The Rackspace-Palantir proposition packages the two elements together: (i) Palantir supplies the context fabric (through its ontology and operational AI) and (ii) Rackspace offers industry-aligned implementation and systems engineering capabilities. The combination targets measurable AI value at scale beyond proofs of concept (POCs).

What you get is a semantic/ontology layer that models the business (customers, orders, products, tickets, shipments, and relationships), so that AI and analytics can operate on business objects. The delivery model kicks off with a five-day boot camp to build a working minimum viable product (MVP) for one target use case, which is then carried through to production and ongoing optimization. Rackspace promises an operating stance that deploys across the hybrid landscape, optimizes for cost, performance, and governance, and owns operational outcomes.
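To make the idea of operating on business objects concrete, here is a minimal sketch in Python. It is an illustration only, not Palantir's ontology or any vendor API: the `BusinessObject` and `Ontology` classes, the relation names (`placed`, `fulfilled_by`), and the sample IDs are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class BusinessObject:
    """One typed business object, e.g. a customer or an order (hypothetical)."""
    object_type: str
    object_id: str
    properties: tuple = ()  # (name, value) pairs; a tuple keeps the object hashable


@dataclass
class Ontology:
    """Hypothetical in-memory ontology: objects plus named links between them."""
    objects: dict = field(default_factory=dict)
    links: list = field(default_factory=list)

    def add(self, obj):
        # Index each object by its type and ID so definitions stay unique.
        self.objects[(obj.object_type, obj.object_id)] = obj
        return obj

    def link(self, source, relation, target):
        # Record a named relationship, e.g. customer -placed-> order.
        self.links.append((source, relation, target))

    def related(self, source, relation):
        # Return every object reachable from `source` via `relation`.
        return [t for s, r, t in self.links if s == source and r == relation]


def shipments_for(ontology, customer):
    """Follow customer -> orders -> shipments using business relationships."""
    return [
        shipment
        for order in ontology.related(customer, "placed")
        for shipment in ontology.related(order, "fulfilled_by")
    ]


# Assumed sample data for illustration only.
ontology = Ontology()
acme = ontology.add(BusinessObject("customer", "C-1", (("name", "Acme"),)))
order = ontology.add(BusinessObject("order", "O-17", (("status", "late"),)))
shipment = ontology.add(BusinessObject("shipment", "S-9"))
ontology.link(acme, "placed", order)
ontology.link(order, "fulfilled_by", shipment)

acme_shipments = shipments_for(ontology, acme)
```

The point of the sketch is that a question such as "which shipments belong to this customer?" is answered by traversing named relationships between business objects, rather than by joining system-specific tables. A production ontology would add governance, lineage, and access controls that this toy model leaves out.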

Source: HFS Research, 2026

Palantir is a name echoing around many boardrooms right now. It’s become something of a poster child for successful, trusted, scaled AI implementations. But there is a bottleneck: its forward-deployed engineer (FDE) model has only so much capacity. Rackspace aims to add to that capacity. It already has 30 trained FDEs and is on track to train another 250 over the next 12 months.

The partnership promises shorter delivery timelines by combining the FDE model with AI tooling, “AI Rackers” (AI agents trained on Rackspace’s delivery methodology), and Palantir patterns to accelerate code generation, documentation, and testing, straight out of the HFS Services-as-Software™ playbook.

Palantir comes with a reputation for high pricing. Rackspace claims its Services-as-Software approach will deliver both speed to market and cost-effectiveness. But CIOs would be wise to seek some controls. Unless the ontology scope is bounded and the operating model is repeatable, transformation could become an expensive forever program. Ask whether there is a minimum viable ontology to support the first use case and what can be realistically achieved in 60–90 days.

You should also seek clarity on who funds which elements (such as the initial boot camp), what triggers follow-on commitments, and how much of the pricing is outcome-based. Finally, you must define which artifacts are yours to own (such as the ontology model, pipelines, and tests) and what the exit route is, if necessary, in 24–36 months. This is the age of impermanence when it comes to tech solutions.

This is not a test. You’ve had enough of those. This is a promise of scaled, productionized AI with hard ROI attached. That’s not to say you go all in or go home. Start with one cross-functional use case for the five-day MVP offer to prove both value and effectiveness across business silos. Then hold the vendors to account for hard KPIs, production service-level objectives, and evidence of delivery efficiency ramped by “AI Rackers.”
