This HFS Highlight is for CIOs, CTOs, and risk and governance leaders moving enterprise AI from model selection to the operating layer, agentic workforce, and runtime governance.

MIT Technology Review’s EmTech, held on April 21–23 at the MIT Media Lab in Cambridge, Massachusetts, brought together the people building the next phase of enterprise AI. Across keynotes and panels, one observation kept surfacing: the operating layer of enterprise AI, long overshadowed by the headline capabilities of foundation models, is finally being built. The companies on stage made clear that this is where competitive advantage is being decided in 2026.

For CIOs, CTOs, and risk and governance leaders who spent the past year on model selection, security debates, and pilot reviews, this shift is significant. The conversation has moved past whether AI works to how it gets embedded into operations, where governance lives, and what an agentic workforce actually looks like in an organization chart. The implications cut directly into how enterprise leaders should be spending their time and budget right now.

For two years, the enterprise AI conversation has been organized around model selection: which one to license, which one to pilot, and which one to scale. EmTech 2026 made it clear that the work has moved one layer down. The companies on stage were not pitching foundation models; they were unveiling the production infrastructure underneath.

That production infrastructure now has a concrete shape: control towers that monitor hundreds of production agents, runtimes that separate reasoning from action, ontology compilers that turn intent into code, data fabrics that enforce semantic layers and role-based access, and orchestration engines that hold agentic workflows across hours and days. These are categories of software being deployed in enterprises today to solve problems that foundation models alone do not address.

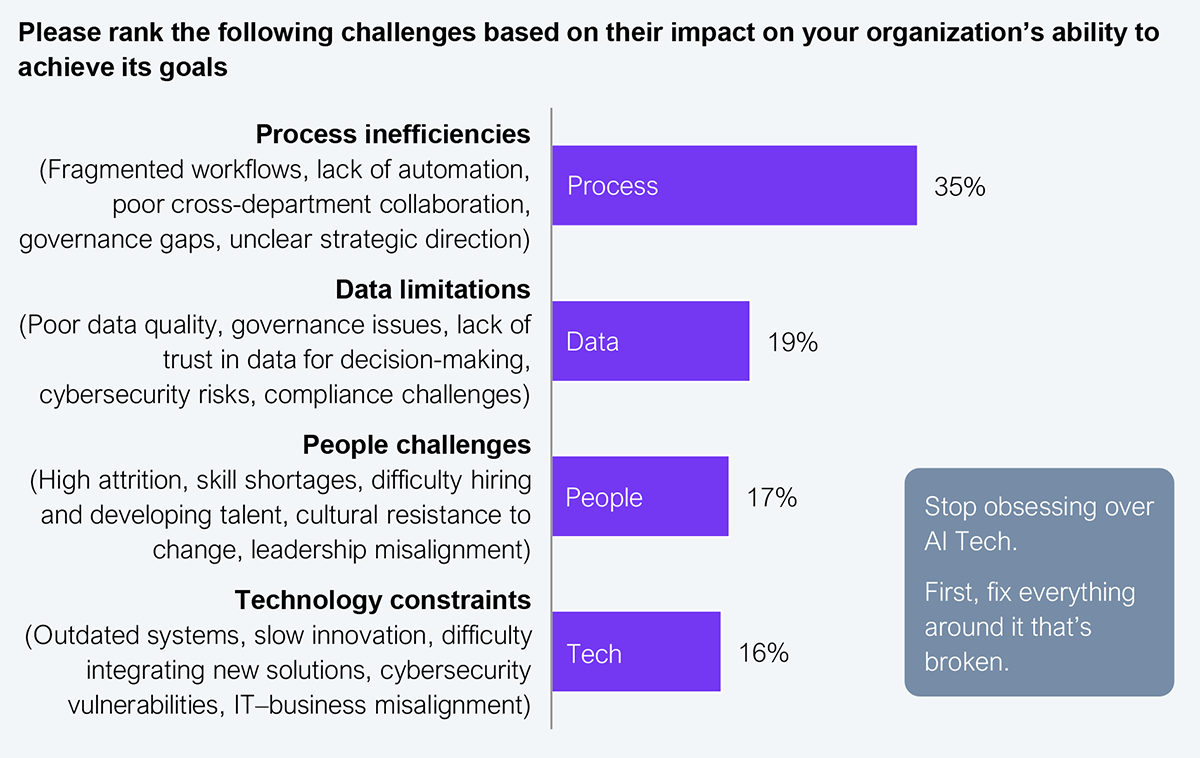

Two market trends confirm that shift. First, frontier AI labs are staffing for deployment, not just model research, with Poolside’s forward-deployed engineering function showing where talent and capital are flowing. Second, HFS’ data shows that enterprise leaders rank technology constraints last among the barriers to achieving their goals, behind process, data, and people challenges (see Exhibit 1).

Sample: 305 major enterprise decision makers

Source: HFS Research Pulse, 2025

For enterprise leaders, that means time and budget should flow toward the operating layer, not another round of model evaluation.

Agents used to be chatbots you asked questions, but now they are employees you assign work to. Every agentic deployment story on stage included the same ingredients an organization chart traditionally reserves for humans: an identity, access controls, performance metrics, and a specific slice of the business to own.

The services that enterprises have bought for decades are being productized into software, and the shift shows up across functions that leaders used to consider solidly human. In sales, Gong.io’s CEO talked about how agents remotely control customer relationship management (CRM) systems and take over the 77% of seller time historically accepted as administrative overhead. In customer support and marketing, Klaviyo showed how a single customer-facing agent personalizes the conversation and closes the sale unless an edge case forces a handoff. In engineering, G5 Labs demonstrated how ontologies compile intent into code at scale within a full commercial CRM in months.

This is what Services-as-Software™ looks like when it runs inside enterprises. The unit of delivery is no longer a headcount or a contract. It is an agent with a job description and a line on the organization chart. For enterprise leaders, the question is not whether to add agents to the workforce, but rather which workflows go first.

Deciding which workflows go first is fundamentally a governance question. For years, most enterprises have treated governance as the brake on AI, with pilots stalling in review queues and frameworks debated for quarters. EmTech 2026 showed the flip.

In a keynote on building an agentic workforce, ServiceNow’s CIO presented the company’s control tower, which monitors more than 300 agents that generate over half a billion in value, and an architectural review board that enables employees to build within guardrails. The companies running agents successfully in production were the ones with tighter, more automated, runtime-embedded governance. Strong governance was the reason they could realize value, not the constraint slowing them down.

The security side made the urgency concrete. According to Palo Alto Networks Unit 42, attackers now exploit publicly disclosed vulnerabilities within 15 minutes, and cyber-focused frontier models are doing a year of penetration testing in three weeks. Scheduled patching is obsolete. AI-speed attacks require AI-speed defense, and “fight AI with AI” has become a competitive requirement, not a research theme.

The data side reinforced that point. AI scales whatever chaos already exists in your data. If your definitions conflict, your permissions are inconsistent, or your semantic layer is missing, agents will industrialize that mess across the enterprise in weeks. Governance-as-compliance slows pilots down. Governance-as-runtime lets enterprises ship at the speed agents demand. For enterprise leaders, the work is to embed governance into the runtime, not bolt another review process on top.
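To make the idea of governance embedded in the runtime more concrete, here is a minimal, hypothetical sketch in Python. It is not drawn from any vendor or session described above. The names `ProposedAction`, `PolicyGate`, and `execute`, and the permission strings, are illustrative assumptions. The agent's reasoning step only proposes an action, and a gate checks role-based permissions and writes an audit record before anything runs.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass(frozen=True)
class ProposedAction:
    """What an agent's reasoning step wants to do; nothing has executed yet."""
    agent_id: str
    operation: str   # hypothetical operation name, e.g. "crm.update_contact"
    resource: str


@dataclass
class PolicyGate:
    """Hypothetical runtime gate that checks every proposed action."""
    # Maps an agent identity to the set of operations it may perform.
    permissions: dict[str, set[str]]
    # Each entry records (agent_id, operation, decision).
    audit_log: list[tuple[str, str, str]] = field(default_factory=list)

    def authorize(self, action: ProposedAction) -> bool:
        allowed = action.operation in self.permissions.get(action.agent_id, set())
        decision = "allow" if allowed else "deny"
        self.audit_log.append((action.agent_id, action.operation, decision))
        return allowed


def execute(
    gate: PolicyGate,
    action: ProposedAction,
    handler: Callable[[ProposedAction], str],
) -> str:
    """Run the handler only if the gate authorizes; otherwise escalate."""
    if not gate.authorize(action):
        return f"escalated: {action.agent_id} may not perform {action.operation}"
    return handler(action)
```

In this sketch the check happens on every call rather than in a periodic review, and a denied action is escalated instead of silently dropped. A real deployment would load permissions from an identity system rather than hard-coding them.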

For enterprise leaders, this is an operating model decision, not a tools decision. The operating layer, the agentic workforce, and runtime governance are aspects of one transformation, not three initiatives to sequence separately. Enterprises that treat them as a single redesign will pull ahead. Those that fragment them will spend the next year watching pilots stall.
