BFSI firms are not failing to scale AI because the technology is “not ready.” They are failing because they are trying to bolt probabilistic systems onto operating models built for deterministic work, fragmented ownership, and legacy constraints.

The roundtable with BFSI executives, conducted in partnership with Sutherland, surfaced a common pattern: pilots look promising, but enterprise scaling hits a wall of process, technology, data, and people debt, compounded by ambiguity over governance and ROI, that derails even the best plans. BFSI CIOs must stop pumping money into more POCs and instead redesign decision rights, workflow ownership, evaluation, and controls so that AI can run safely as part of the workforce.

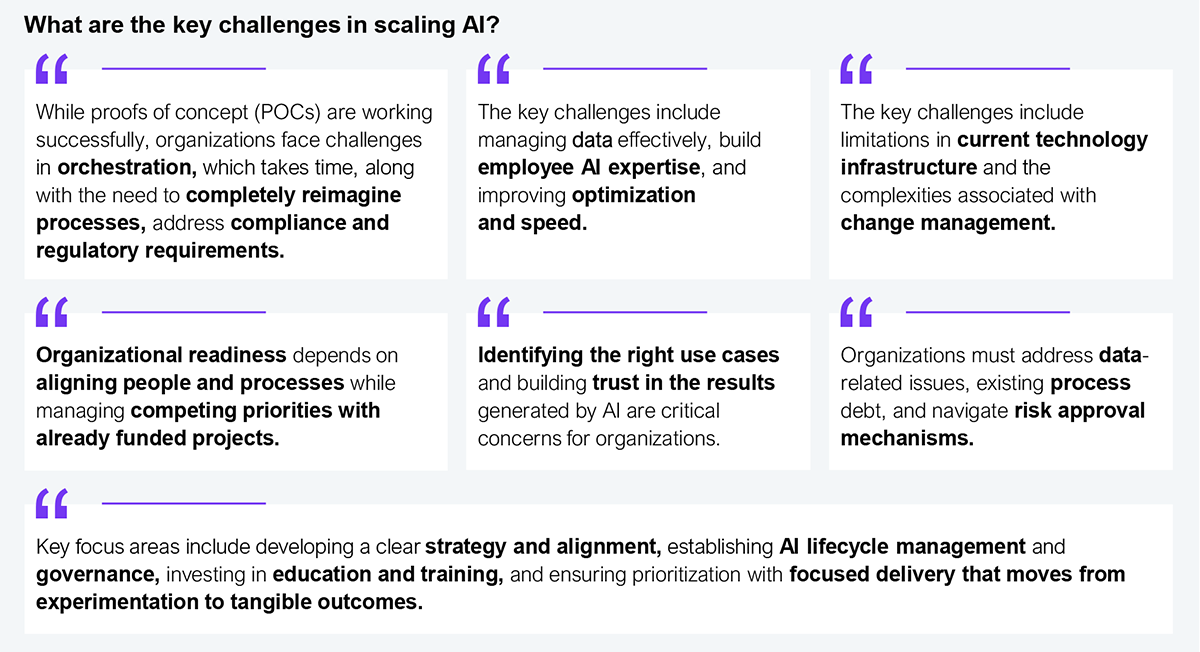

BFSI CIOs have moved beyond debating whether AI works and are now wrestling with how to make it repeatable, governable, and value-producing at enterprise scale. Roundtable participants described a reality in which AI has become a catch-all label, much as "digital transformation" did before it, creating confusion, scattered investments, and misaligned expectations across functions (see Exhibit 1).

Sample: n=12 delegates

Source: HFS Research, 2026

A BFSI executive compared AI today to owning a garage full of high-performance cars: the promise of the powerful engines is real, but the firm lacks the “AI interstate system” needed to run them at full power. At the same time, regulatory pressure and customer intolerance for opaque risk further constrain funding for open-ended experimentation that offers no proof of value.

Another participant captured the core dilemma: there is “proof of concept” and “proof of promise,” but not enough “proof of value,” leading to “death by 1,000 POCs” as experiments proliferate without convergence. Building the path for AI at scale in BFSI is a collective responsibility. Regulators must create clearer lanes for responsible innovation, enterprises must redesign operating models, and providers must deliver production-grade, governed outcomes.

Leaders at the roundtable described AI as a powerful engine being placed into a system without the “highways” needed to use it effectively because BFSI organizations are structurally optimized for stewardship and risk control, not rapid redesign of how work gets done.

Three failure modes kept recurring:

BFSI firms can’t scale AI by doing more pilots. They must scale by making a small set of practices repeatable across value streams. The leaders who reported meaningful progress are treating AI as an operating capability built from common, reusable building blocks. They emphasized redesigned workflows, clean data definitions, fit-for-purpose architecture, risk-tiered governance, and outcome instrumentation as key to standardizing “how” AI is taken from idea through production to a monitored run-state. Participants pointed to the following best practices as the difference between isolated wins and enterprise-scale adoption:
