COOs, CIOs, and transformation leaders are pushing agentic AI into processes that still depend on people to patch the gaps. What looks like a stable workflow is often held together by undocumented decisions, informal workarounds, and inconsistent exception handling. That gap between how work is defined and how it actually runs is process debt.

For years, teams have absorbed process debt by interpreting context, making trade-offs, and keeping execution moving when systems fall short. Agentic AI will not absorb it; instead, it exposes those gaps and scales them. If automation continues without fixing the underlying decision logic, execution will move faster, but outcomes won't improve.

Many leaders believe they understand their processes because they can map the workflow, document the steps, and point to the systems involved. But what they usually see is only the visible layer of execution.

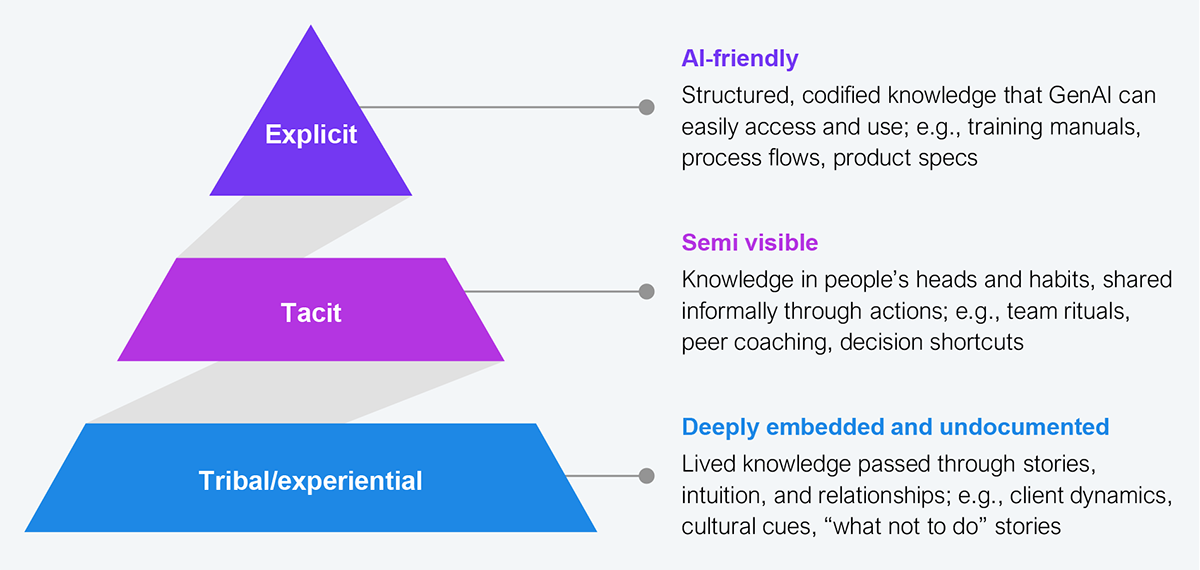

Enterprise knowledge sits across three layers: explicit, tacit, and tribal (see Exhibit 1).

Source: HFS Research, 2026

Most automation efforts focus on the explicit layer because it is visible, structured, and easier to codify. But the decisions that actually shape outcomes (prioritization, exception handling, escalation, and trade-offs) are often driven by tacit and tribal knowledge, and those decisions determine whether work flows smoothly or breaks down under pressure.

The core issue, then, is that enterprises are not automating processes; they are automating incomplete representations of reality. That is how process debt builds. The workflow looks complete, but the logic that drives execution remains in people's heads.

For years, enterprises have been optimizing processes for human execution. Workflows were often under-specified in terms of decision logic, exception handling, and handoffs because people could interpret context, manage exceptions, and make trade-offs in real time.

That design assumption created process debt, the accumulated operational cost of undocumented decision logic and exception handling. It rarely appears as a system failure. Instead, it shows up as workarounds, manual overrides, inconsistent decisions, and dependence on experienced employees.

On paper, the process looks complete. In practice, it only works because people fill in what the system never captured. Agentic AI removes that buffer.

Previous waves of automation could operate within imperfect processes because humans remained in the loop. Robotic process automation (RPA), workflow tools, and even GenAI copilots relied on people to interpret context, resolve exceptions, and fill in missing logic when processes broke down.

Agentic AI changes that equation by making decisions and executing them without constant human intervention. That, however, requires processes to be complete, explicit, and executable. When decision logic is missing, exception paths are undefined, or handoffs rely on human judgment, agentic systems do not correct the process; they scale its weaknesses.

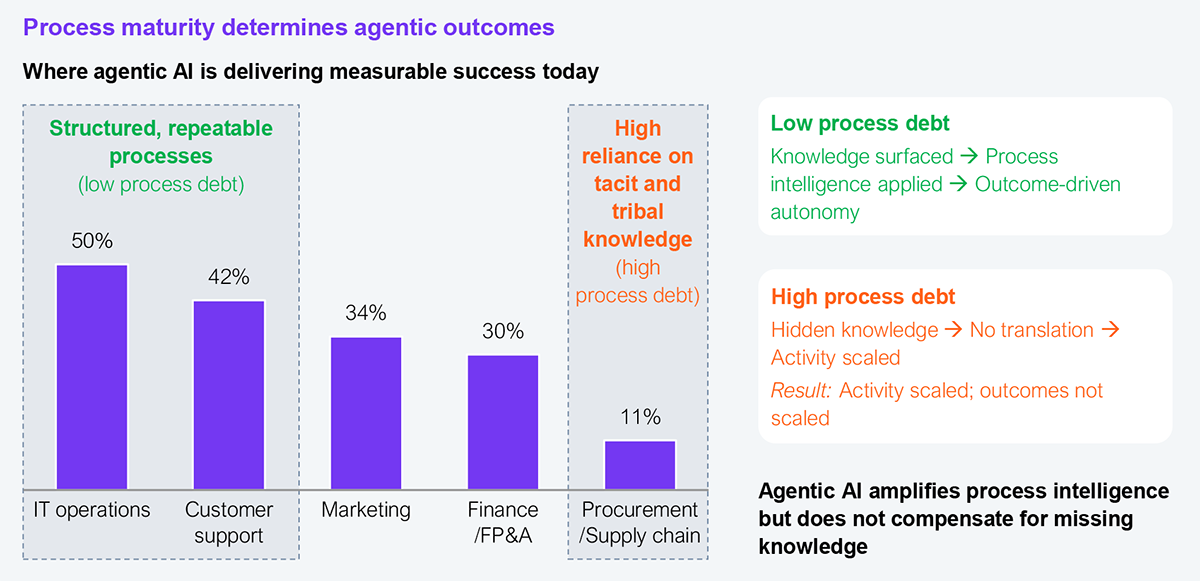

This pattern of agentic AI succeeding in some areas and stalling in others is already visible (see Exhibit 2). In low process debt environments, where knowledge has been surfaced and translated into executable logic, agentic AI drives outcomes. In high process debt environments, hidden knowledge remains embedded in people, so activity scales, but outcomes do not.

This divide is also reflected across functions. Areas where decision paths are more structured, such as IT operations and customer support, show stronger results. Functions such as procurement and finance, where execution still depends heavily on undocumented judgment, are lagging.

Source: HFS Research, 2026

Agentic AI is not an efficiency tool but a stress test of how well a process has been engineered. So before scaling it, enterprises must make decision logic visible, governed, and executable. Without this, they are not scaling intelligence; they are scaling process debt.

The starting point is not enterprise-wide transformation. It is identifying the workflows where decisions and exceptions drive outcomes: processes that appear stable but depend heavily on human judgment to function.

A simple way to assess this is to ask three questions about any process being targeted for agentic AI:

If the answer to any of these is no, the process is not ready for autonomy. From there, three actions are non-negotiable:

This is not about documenting processes more thoroughly. It is about engineering how decisions are made so systems can execute them without human intervention. Enterprises that do this will use agentic AI to drive consistent, scalable outcomes. Those that do not will move faster but will only scale inconsistency.

Decades of unmanaged tacit and tribal knowledge have created process debt that remained hidden in human execution. Autonomy brings that debt to the surface. For COOs, CIOs, and transformation leaders, the priority now is to shift from scaling automation to stabilizing how work gets done.
