The quarterly strategy session sounds like a chatbot wrote it. Performance reviews from the freshly updated HR system are cleanly written and all cluster on the same strengths and weaknesses, showing every tell from the “Top 10 things that make writing look AI-generated” lists. Nobody flinches at the uncanny valley of almost-human content. Speed gets mistaken for thought. Polish gets mistaken for judgment.

Previous waves of automation took over tasks but left judgment largely with humans. Tasks got done more efficiently, but a human in the loop still added judgment. The worm has turned. Most leaders worrying about AI today focus on over-reliance, concerned that employees trust outputs they should have questioned. That concern is valid, but it’s also narrow.

AI is the first automation wave effectively removing humans from the loop. It’s extractive. It’s not just displacing jobs but quietly redesigning how work gets done, asking less and less of the workforce. This is a structural shift where the workplace is being redesigned to require less human judgment, and judgment weakens when it is no longer required.

The CHRO mandate is now to build cognitive resilience into the workforce, maintaining a workforce’s capability to continue thinking effectively even when AI is producing a significant portion of the work. Examples of cognitive resilience include framing problems, exercising judgment, and challenging AI-generated output. You can’t train cognitive resilience; you have to design it into how work, performance, and leadership expectations operate across the business.

The operating model decisions made during AI rollout were optimized for speed, fewer handoffs, and lower cost. Rational, on those terms. But they carried the unstated assumption that human judgment would keep filling the gaps AI left behind, and it hasn’t. Judgment weakens when it is no longer required, and the new workflows no longer require it. People are being asked to review, approve, and move on rather than to think.

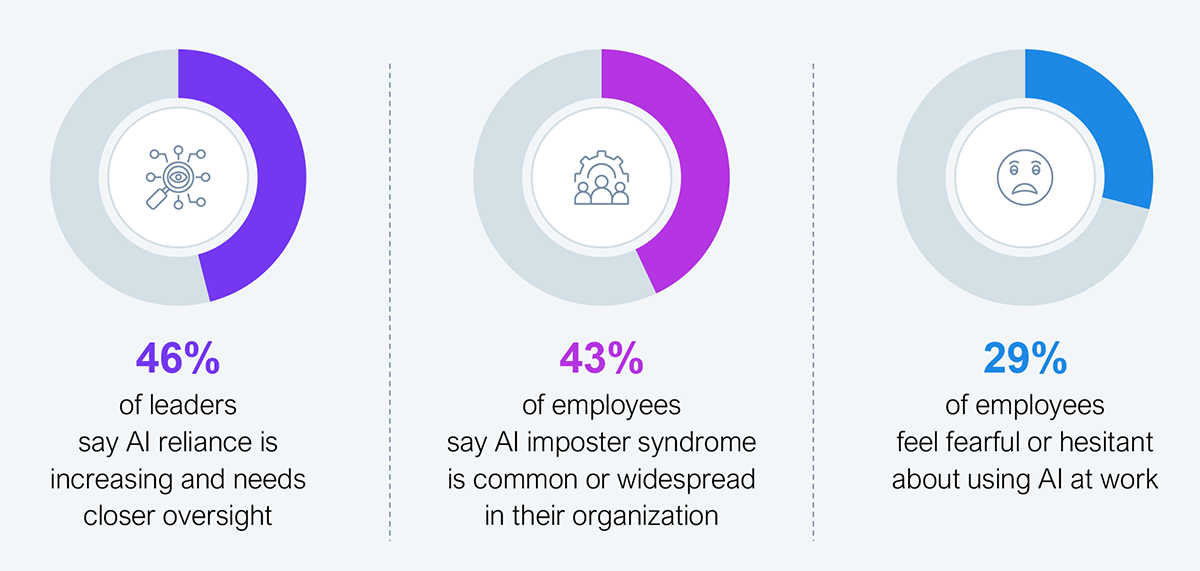

In our recent study, two populations looking at the same workplace from opposite ends reach the same conclusion. Almost half (46%) of leaders say reliance on AI is increasing beyond comfortable levels, and nearly the same share (43%) of employees call AI-related self-doubt common or widespread. Almost a third are fearful or hesitant about using AI at work (see Exhibit 1).

One group defers to the machine. The other no longer trusts itself alongside it. Both signal the same failure: the organization has stopped building human judgment and started designing it out.

The speed of AI-assisted work compounds the problem. People don’t have time to check sources. They don’t have time to question whether the data makes sense. They don’t have time to notice when the machine confidently got it wrong. This is automation bias with a tailwind.

Sample: 505 Global 2000 leaders

Source: HFS Research, 2026

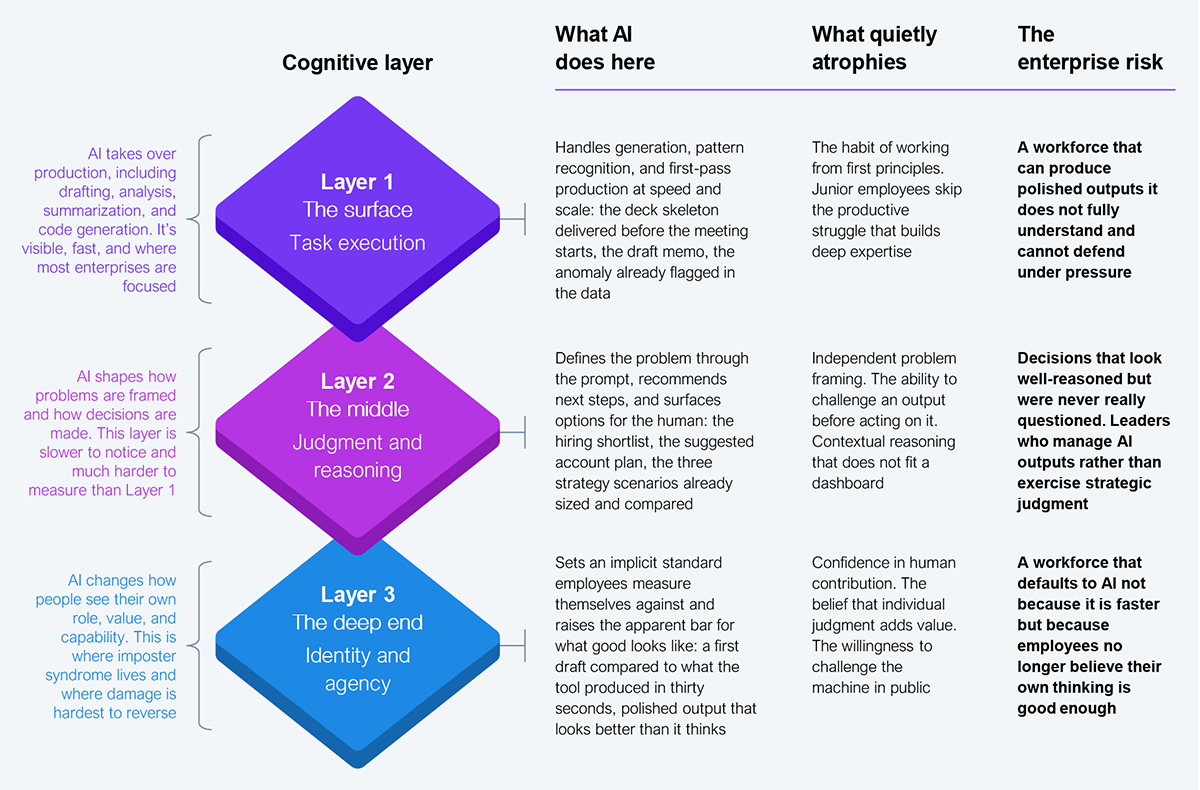

Here is what makes cognitive atrophy hard to see. It doesn’t weaken every capability at once. It moves through layers, and each layer is harder to measure than the one above it. Task execution sits on top. That’s the visible layer, the one the dashboards track. Below that are judgment and reasoning, which are slower to erode and much more consequential when they do. The deepest layer is identity and agency, where people quietly stop trusting their own thinking enough to challenge the machine (see Exhibit 2).

Most enterprise attention is still on Layer 1: adoption rates, output volumes, production speed. All tracked, all reported, and all missing the point. Layers 2 and 3 are where people learn whether their job is to think or just to process what the system gives them. Left unmanaged, that quiet demotion doesn’t preserve capability. It erodes it.

Source: HFS Research, 2026

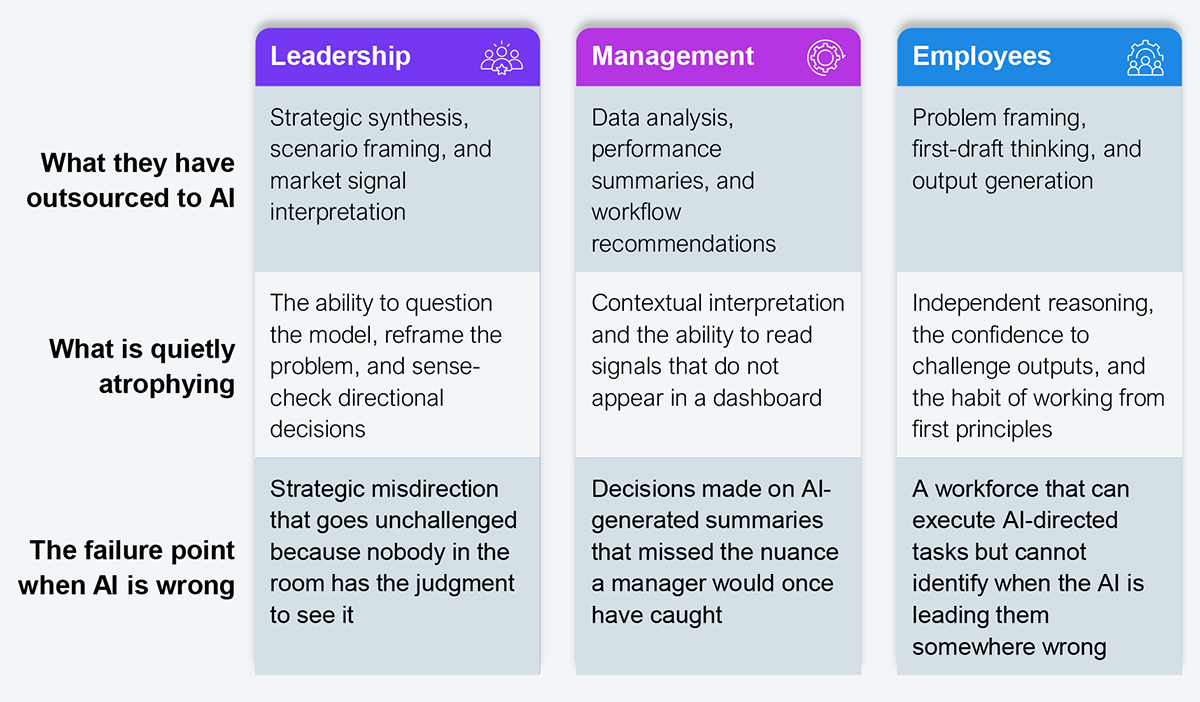

The pattern runs through the talent pipeline. Leaders are letting AI summarize strategy for them. Managers are swapping judgment for dashboard recommendations. Employees are handing their first draft, and their first thought, to the tool. At every level, a different cognitive capability is being handed off to the machine.

Each shift looks like an efficiency gain. Together, they produce an organization that moves faster but thinks less. That’s a workforce design failure, and workforce design sits with the CHRO. The real risk isn’t that AI replaces the roles being hired for. It’s that the people in those roles no longer have the judgment to recognize when AI is leading them off course (see Exhibit 3).

Source: HFS Research, 2026

Cognitive resilience isn’t a training problem. You don’t fix it with another AI module. You build it by redesigning how work, performance, and leadership expectations operate across the business.

To do that, CHROs must:

That’s the CHRO mandate now. Not to help the workforce adopt AI faster, but to make sure the workforce still knows how to think when AI is in the room. Because once judgment disappears from the way work gets done, it will eventually disappear from the people doing it.

So, here’s the real question. It isn’t about the workforce. It’s about the person accountable for how that workforce thinks. When was the last time you made a talent decision, a promotion call, or a reorganization that the machine didn’t frame for you? If the answer doesn’t come quickly, the cognitive atrophy this paper describes isn’t just in the workforce. It’s in the office of the person reading it.
