Artificial Intelligence (AI) is many things: It is hyped and undefined, yet it is becoming pervasive and fostering emotional and, at times, heated discussions. It is therefore hardly surprising that organizations and buyers are massively confused about it. The research for our inaugural Enterprise AI Blueprint reinforces this view, as almost all the providers and buyers share this observation. As my esteemed colleague Phil has rightly highlighted, we are confusing the market even more by discussing the issues between two extremes: On the one hand we have low-level RPA deployments, and on the other we have the benchmark of Ray Kurzweil’s notion of the Singularity. This notion not only suggests that AI will pass a valid Turing test and therefore achieve human levels of intelligence, but also that when it does, we will multiply our effective intelligence a billion-fold by merging with the intelligence we have created. If we want to avoid a repeat of the times when the supply side confused the hell out of the industry by the way it discussed RPA, we should take a step back and be clear about what we are trying to analyze and compare.

Not all AI is equal: stakeholders have to be clearer about the use cases for AI

Stakeholders’ confusion is compounded by the challenge of understanding and analyzing the myriad AI startups entering the market. All too often we get pointed to vendor landscapes that depict tons of AI startups. Most buyers and stakeholders have neither the time nor the interest to understand the value propositions of those players. This gets even more complicated as the mega ISVs come to the fore, either with dedicated algorithms and offerings or with broader platforms that have integrated AI capabilities. Consequently, many stakeholders struggle to steer through this noise and fuzziness. Some service providers are trying to add more clarity by aligning offerings to generic activities. For instance, Capgemini is talking about five senses of Intelligent Automation:

Listen, talk, interact: Listening, reading, talking, writing, and responding to a user of an Intelligent Automation solution;

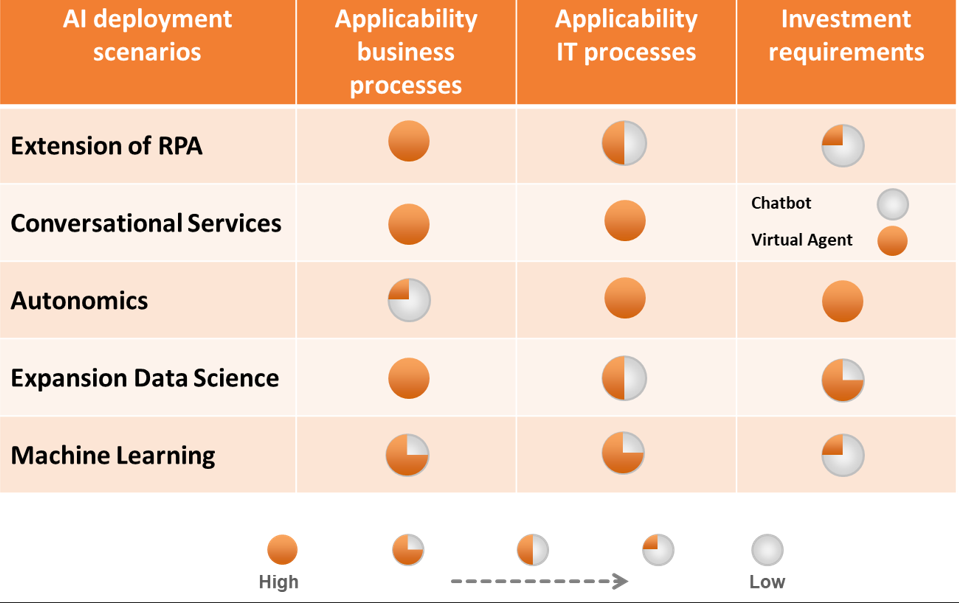

Similarly, KPMG suggests that most enterprise decision making is some combination of the following cognitive automation patterns: extract, compare, clarify, retrieve, and discover. Both approaches help to make AI and Intelligent Automation more accessible. Yet, we need more clarity on the starting points of AI discussions as well as the use cases following on from those discussions. One way to provide more clarity is to cluster all those approaches. As highlighted in Exhibit 1, we are seeing at least five different starting points for AI projects. Suffice it to say there are many others, not least those with a highly specific vertical context, but this approach of clustering might help to convey the fundamental differences between those five clusters. RPA and chatbots are low level; compare those with the expansion of data science projects, autonomics, or even virtual agents, which are highly complex and require significant investments. For many buyers, all those chatbot PoCs are a risk-free toe-dip into the sea of AI in the hope of learning some valuable lessons. However, how do you compare this to the Watson projects costing millions trying to cure world hunger?

Exhibit 1: The Journey Toward AI Has Disparate Starting Points

Source: HfS Research 2017

The evolution of RPA and the expansion of data science projects are both business process-centric, yet with wildly different barriers to entry and investment requirements. In contrast, autonomics is all about IT-centric scenarios. At the same time, the integration of machine learning capabilities is becoming ubiquitous, with platform plays such as Salesforce’s Einstein or SAP’s Leonardo becoming reference points. Yet, it is only when we compare apples with apples that we might arrive at meaningful ways of assessing the market. By the same token, the criteria of investment requirements and barriers to entry provide some guidance on the market dynamics. Most stakeholders we spoke to don’t expect the plethora of providers depicted on all those vendor landscapes to become cornerstones of new ecosystems. The reason for this expectation is the sheer level of R&D investment needed. Consequently, these providers should be assessed from a capabilities perspective rather than with the expectation of finding the next unicorn.

Key lessons learned from early AI deployments

In view of this heterogeneity, are there ways to call out common themes? Recently at KPMG’s Executive Symposium on Intelligent Automation in Jacksonville, HfS had the opportunity to listen to and discuss many of the issues we have raised. Despite the high quality of all the presentations and the ensuing discussions, the confusion around AI was tangible. Traci Gusher, KPMG Principal for Data, Analytics, and AI, cut through this fog by summarizing the discussions in Florida with the following observations on AI:

It’s about service orchestration, stupid!

What this all boils down to is that transforming service delivery is not about individual technologies or approaches but all about service orchestration. It is about blending technology innovations and data. It is about finding as much commonality as possible across delivery backbones. Yet, as KPMG’s Traci Gusher has rightly pointed out, you can’t apply the plug-and-play mindset of RPA and Intelligent Automation one-to-one to AI. But you can think of repurposing data sets and leveraging process steps in knowledge repositories. Only when expanding lower-level RPA toward AI by integrating Google or Microsoft libraries does the notion of plug-and-play make some kind of sense.

This throws us back to the many discussions on the HfS Intelligent Automation Continuum that we have had over the years. The main thought behind the continuum is that all the approaches depicted on it are both interdependent and overlapping; the continuum does not imply a linear progression from RPA to notions of cognitive, AI, and beyond. It is at this point that many RPA discussions fade into fuzziness. While there is a linear progression of RPA tool sets toward notions of operational analytics and AI, there is no linear progression for other scenarios. To reinforce this distinction, HfS introduced the Triple A Trifecta to emphasize the non-linear evolution of Intelligent Automation.

In conclusion, approaching digital transformation and the journey toward the OneOffice with a mindset of service orchestration is more important than ever before. Yet, the mindboggling rise of AI is challenging many established thought patterns and oversimplifying arguments on automation. To avoid the mistakes of the early RPA days, we urgently need a more nuanced discussion on the application of AI in service delivery.

Bottom line: We need to learn from the mistakes of the early RPA discussions and focus on outcomes and use cases for AI

Buyers are clear that they don’t buy RPA, AI, or blockchain off the shelf; instead, they aim to procure an outcome. Therefore, the industry has to move beyond technology-centric narratives and put the reimagination of processes center stage. To accelerate this journey, the discussions need to be more differentiated and nuanced. Otherwise, we will repeat the mistakes of the early RPA days, when the discussions focused on notions of RPA being a silver bullet, non-invasive, and mainly intended to replace FTEs. To help overcome the confusion of many stakeholders around the broad notion of AI, we should start with one thing: Be specific and transparent about outcomes and use cases. To do that, we need to acknowledge the disparate starting points and market segments. For more details on all the issues raised, stay tuned for HfS’ inaugural Enterprise AI Services Blueprint.
